|

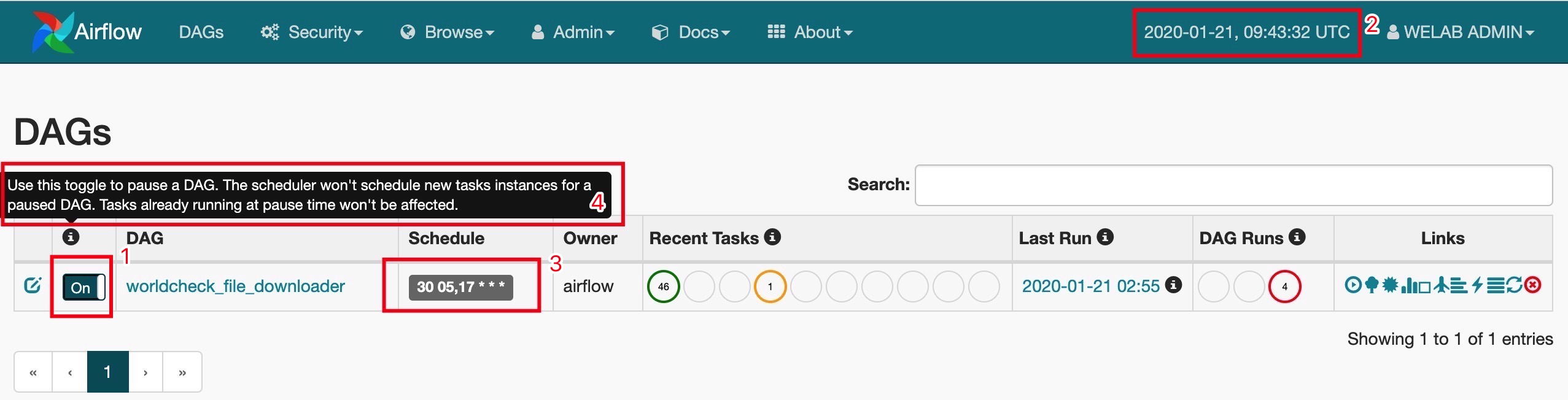

6/29/2023

Airflow DAG trigger another DAG

Let me show you how to write your first Directed Acyclic Graph (DAG) following all best practices and become a true DAG master!

How to write DAGs following all best practices

If you have to apply settings, arguments, or information to all your tasks, the recommended best practice is to set up default_args and to avoid top-level code that is not part of your DAG. You will need to enable the DAG (by switching the On/Off toggle button) for it to be picked up by the scheduler. Once the DAG is enabled, we can also run it.

Dependencies between DAGs can be split across three components. DependencyRuleEngine: registers a dependency. This can be automated if the DAG doc mentions the UPSTREAM DAGID and TASKID. DependencyEvaluation: responds with the status of the DAG and of the DAG-task pair. It can be hooked to the backend DB of Airflow to get this info. Executor: triggers DAG execution for a given dependency.

You can also trigger another (reusable) DAG to gather runtime metadata, for example through an Airflow task such as trigger_get_metadata_dag.

In the notebook example, the second cell prints the value of the greeting variable prefixed by hello.

The timezone in Airflow and what can go wrong with it

Dealing with time zones can, in general, become a real nightmare if they are not set correctly. Time zones in Airflow are set to UTC by default, so all times you observe in the Airflow web UI are in UTC. It is highly recommended not to change this. Understanding how time zones work in Airflow is important because you may want to schedule your DAGs according to your local time zone, which can lead to surprises when DST (Daylight Saving Time) happens. You should be able to trigger your DAGs at the expected time no matter which time zone is used. Each DAG run in Airflow has an assigned data interval that represents the time range it operates in.
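To make the daylight-saving caveat above concrete, here is a minimal sketch using only the Python standard library (no Airflow involved). It converts "09:00 local time" to UTC on a winter and a summer date. The time zone Europe/Warsaw and the helper name local_nine_am_in_utc are assumptions chosen for illustration.

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo  # standard library since Python 3.9

# Hypothetical local time zone, chosen only for this illustration.
LOCAL_TZ = ZoneInfo("Europe/Warsaw")


def local_nine_am_in_utc(year: int, month: int, day: int) -> datetime:
    """Return the UTC instant that corresponds to 09:00 local time."""
    local = datetime(year, month, day, 9, 0, tzinfo=LOCAL_TZ)
    return local.astimezone(timezone.utc)


winter_run = local_nine_am_in_utc(2023, 1, 16)  # standard time, UTC+1
summer_run = local_nine_am_in_utc(2023, 7, 17)  # daylight saving time, UTC+2

print(winter_run.isoformat())  # 2023-01-16T08:00:00+00:00
print(summer_run.isoformat())  # 2023-07-17T07:00:00+00:00
```

The same local wall-clock time maps to two different UTC hours, so a schedule written as a fixed UTC time drifts by one hour in local terms when DST starts or ends.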
The Airflow setup for this example involves the following steps. Initialize an environment variable named AIRFLOW_HOME set to the path of the airflow directory. Install Airflow and the Airflow Databricks provider packages. Create an airflow/dags directory; Airflow uses the dags directory to store DAG definitions. Initialize a SQLite database that Airflow uses to track metadata. The SQLite database and default configuration for your Airflow deployment are initialized in the airflow directory. In a production Airflow deployment, you would configure Airflow with a standard database. Finally, create an Airflow DAG to trigger the notebook job.

Note that DAG Runs can also be created manually through the CLI by running an airflow trigger_dag command (airflow dags trigger in Airflow 2), where you can define a specific run_id.

What is a DAG? What is the main difference between a DAG and a pipeline?

You probably already know what the abbreviation DAG means, but let's explain it again. A DAG (Directed Acyclic Graph) is a data pipeline that contains one or more tasks with no loops between them. If you've previously visited our blog, then you couldn't have missed “Apache Airflow – Start your journey as Data Engineer and Data Scientist”.
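The "no loops" property in the DAG definition above can be illustrated without Airflow. The sketch below uses the standard library's graphlib to order a small set of tasks and to reject a set that contains a loop. The task names and the helper execution_order are hypothetical, and this is not how Airflow itself validates DAGs.

```python
from graphlib import CycleError, TopologicalSorter  # Python 3.9+


def execution_order(dependencies: dict) -> list:
    """Return a valid task order; raises CycleError if the tasks form a loop."""
    return list(TopologicalSorter(dependencies).static_order())


# Hypothetical pipeline: each key maps to the set of tasks it depends on.
pipeline = {"extract": set(), "transform": {"extract"}, "load": {"transform"}}
order = execution_order(pipeline)
print(order)  # ['extract', 'transform', 'load']

# Adding "extract depends on load" closes a loop, so this is no longer a DAG.
looped = {"extract": {"load"}, "transform": {"extract"}, "load": {"transform"}}
try:
    execution_order(looped)
    has_cycle = False
except CycleError:
    has_cycle = True
print(has_cycle)  # True
```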

Isolating packages in a Python virtual environment helps reduce unexpected package version mismatches and code dependency collisions.

We can use the BranchPythonOperator to define two code execution paths: the first is chosen during regular operation, and the other in case of an error.
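With BranchPythonOperator, the branching decision is an ordinary Python function that returns the task_id of the path to follow. The sketch below shows only such a function so that it runs without Airflow installed. The task ids regular_processing and handle_error and the error_detected flag are hypothetical names for this illustration.

```python
def choose_path(error_detected: bool) -> str:
    """Return the task_id of the branch that should run next."""
    # Hypothetical task ids; they must match tasks defined in the same DAG.
    return "handle_error" if error_detected else "regular_processing"


print(choose_path(False))  # regular_processing
print(choose_path(True))   # handle_error
```

In a DAG, this function would be passed as python_callable to BranchPythonOperator, with both target tasks placed downstream of the branch task; the task that is not selected is skipped.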

Databricks recommends using a Python virtual environment to isolate package versions and code dependencies to that environment. The setup script includes commands such as pipenv install apache-airflow-providers-databricks and airflow users create (with --username admin, --role Admin, and your own values for --firstname, --lastname, and --email). When you copy and run the setup script, you perform these steps: create a directory named airflow and change into that directory, then use pipenv to create and spawn a Python virtual environment.

You can trigger a DAG manually from the Airflow UI, or by running an Airflow CLI command from gcloud. The TriggerDagRunOperator is the easiest way to implement DAG dependencies in Apache Airflow. It allows you to have a task in a DAG that triggers another DAG.


|
